the Creative Commons Attribution 4.0 License.

the Creative Commons Attribution 4.0 License.

Technical note: A framework for causal inference applied to solar radiation and temperature effects on measured levels of gaseous elemental mercury in seawater

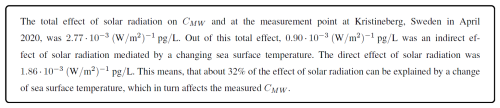

Michelle Nerentorp Mastromonaco

Environmental science usually requires researchers to rely on observational data alone. However, researchers want to identify causal relationships and not only correlations between pollutant behaviour and other environmental factors such as weather. Previously it has been shown that solar radiation associates with the volatilisation and evasion of the hazardous pollutant mercury from sea surfaces into the atmosphere. Statistical and machine learning methods can help find and quantify such associations. However, association does not imply causation, and inferring causal relationships from observational data alone remains a significant challenge. Here, we aim to create an “easy-to-follow” framework, to be used by environmental researchers, for using prior scientific knowledge encoded as graphical causal models to enable causal inference and to estimate effect sizes of different related factors using collected field data. We demonstrate the framework through a case study estimating the effect sizes of solar radiation and sea surface temperature on levels of gaseous elemental mercury (CMW) in seawater measured at the west coast of Sweden. Our causal analysis reveals that 32 % of the total effect of solar radiation on (CMW) is mediated indirectly via changes in sea surface temperature. Wind and instrumentation intrinsic factors biased the estimates by 4.5 %. Results from the case study show that our proposed framework allows for a rigorous design, validation, and reporting of causal inference in environmental science. The framework shows potential in modelling causes of pollutant dynamics and quantifying the effect of regulating policies such as the Minamata Convention on Mercury.

- Article

(13427 KB) - Full-text XML

- BibTeX

- EndNote

Environmental, and particularly atmospheric monitoring and research, rely on direct measurements and modelling to understand the processes involved in the formation, transport, evasion, and deposition of different pollutants. The purpose of environmental monitoring is often defined and driven by national and international legislations and directives, defined within the European Union (European Parliament and Council, 2004), or through international conventions such as the United Nations Framework Convention on Climate Change (United Nations, 1992) and the Convention on Long-Range Transboundary Air Pollution CLRTAP (United Nations Economic Commission for Europe, 2024; United Nations, 1979). Depending on legislative and pollutant, the requirements for monitoring could include either modelling, estimations, or direct and continuous measurements with yearly reporting to co-operative programs under CLRTAP, e.g., the European Monitoring and Evaluation Programme (EMEP, 2023). For mercury, directive 2004/107/EG describes the need of ensuring collecting publicly available information about concentrations in air and deposition in every member state of the European Union (European Parliament and Council, 2004). The directive further demands that indicative measurements of mercury shall be performed at background sites at a spatial resolution of 100 000 km2. Within the United Nations, a global convention, aimed to protect human health and the environment from the exposure of mercury, was agreed on in January 2013 and was named the Minamata Convention on Mercury (United Nations Environment Programme, 2024). The convention entered into force in August 2017 and is today ratified by more than 150 parties all over the world. At present, an Effectiveness Evaluation Group has been selected to assess the effectiveness of the convention by studying trends and changes in mercury concentrations in different media (United Nations Environment Programme, 2024).

This leads to another aspect of environmental monitoring: the use of computer models to analyse and interpret complex environmental data to make predictions about unseen or future data points, to understand patterns and trends, and to evaluate the effectiveness of current legislatives. Learning about the dependencies between variables from observational data allows us to build predictors that provide necessary estimates of previously unseen data. These predictors are statistical learning machines, which can take the form of simple regression models or even highly complex and opaque ML models such as convolutional neural networks (CNNs). However, environmental researchers are not only interested in building opaque, black-box prediction machines that magically predict future data points from observational data. Instead, they are particularly interested in understanding cause-effect relationships to suggest interventions that reduce pollutants in the environment. Causal knowledge, or in other words the analysis of cause-effect relationships, is one of the “fundamental goals of science” (Vowels et al., 2022; Rose and van der Laan, 2011).

Pearl et al. (2016) highlight that causal questions, i.e., question about what are causes and effects, usually cannot be answered from observational data alone. Instead, additional assumptions are needed that specify an assumed causal structure underlying the data-generating process. Causal inference from observational data is therefore not assumption-free. Its conclusions depend on the correctness and completeness of the prior knowledge represented as graphical causal model. Accordingly, the framework presented in this paper does not aim to discover causal structure from data alone, nor does it aim to provide a definitive proof of causation. Instead, its scope is to offer a transparent and principled way to reason about causal effect sizes using observational environmental data and prior knowledge, and to assess the compatibility of that prior knowledge with the observed data. By making prior knowledge and assumptions about cause-and-effect relationships explicit as graphical models, causal conclusions drawn from observational data can be scrutinised, criticised, and revised.

This paper reports the results of a case study on extracting causal knowledge about the contribution of different environmental processes to the observed levels of gaseous elemental mercury (CMW) in seawater. Although measurements of gaseous mercury in water is not yet a requirement within any EU directive, the results from novel continuous measurements of mercury (Hg) in surface water are used as a case study. This paper proposes a framework for obtaining and reporting causal knowledge from environmental observational data. Through the case study, this paper explains how to build statistical models that not only predict future values of an outcome variable but also allow the inference of causal relationships between predictor variables and the outcome using observational data.

1.1 Environmental monitoring of mercury emissions

Mercury is considered by the World Health Organization to be one of the top ten chemicals or substances to be of major concern to public health (Cohen et al., 2005). This volatile and toxic element is found naturally in various environmental compartments and originates from both natural and anthropogenic sources, such as artisanal small scale gold mining, burning of fossil fuels and various industrial activities. As a gas, mercury is stable and has a long residence time in air, resulting in a global spread via the atmosphere to remote, pristine, and vulnerable environments such as the polar regions. Mercury is deposited from air by dry and wet deposition, often via oxidation from Hg0 to HgII. Oxidised mercury in seawater, for example, transforms more easily into methylmercury which can bioaccumulate in aquatic food chains. However, it can also reduce back to its elemental form and evade back to the atmosphere, where it is capable of fast long-range transport. Mercury evasion from sea surfaces accounts for almost 50 % of the annual contributions to the atmospheric mercury load. This is because much of the oceans' surfaces are supersaturated with elemental mercury compared to the atmosphere, resulting in net water-to-air evasion (AMAP, 2021). Understanding the drivers behind formation of dissolved gaseous mercury (DGM) and subsequent flux is key to understand the bioavailability of methylmercury in seawater and supporting global models with information about spatial and temporal variability (Soerensen et al., 2013). Mercury flux models for seawater suggest that the flux, and thus the fluctuation of DGM concentration in surface waters, is mostly influenced by environmental factors such as wind speed, temperature, photochemistry and microbial activity (Soerensen et al., 2013; Johnson, 2010; Kuss et al., 2009). What controls the formation of DGM is also debated, and it is discussed that it is formed by demethylation processes of methyl- and dimethylmercury in the subsurface ocean (Munson et al., 2018). Demethylation could be either abiotic or biotic, with an abiotic process being photo-demethylation controlled by solar radiation (AMAP, 2021). The connection between the formation of DGM and solar radiation has previously been studied in various environmental compartments such as the sea, lakes, soils, and salt marshes (Xie et al., 2019; Sizmur et al., 2017; Dill et al., 2006; Gårdfeldt et al., 2001; Amyot et al., 1997). In several studies, the relationship between DGM and solar radiation was quantified by determining correlation coefficients of 0.66 (Cane Creek Lake, USA; Dill et al., 2006), 0.99 (river near Knobesholm, Sweden; Gårdfeldt et al., 2001), and 0.39 (coastal Minamata Bay, Japan; Marumoto and Imai, 2015), suggesting that solar radiation can be an important, though site-dependent, predictor of DGM. Other studies report similar relationships between mercury evasion and solar radiation with correlation coefficients of 0.7, (Adriatic Sea; Floreani et al., 2019) and 0.5–0.9 (Wuijang River, China; Fu et al., 2013). However, there is probably more to the story of how the concentration of DGM is influenced by external factors such as solar radiation. Sizmur et al. (2017) hypothesised that the formation of DGM probably is affected by a combination of solar radiation and increased temperature. Zhang et al. (2006) performed a Pearson analysis of DGM and various factors measured at Lake St. Pierre (Canada), including air and water temperature, wind speed and solar radiation. The analysis showed significant correlations between DGM and all of these factors. Other aspects, such as water depth, have also been shown to have an effect on how strong the influence of solar radiation is for the formation of DGM in surface seawater, since the correlation mainly has been observed at the coast (Nerentorp Mastromonaco et al., 2017; Fantozzi et al., 2013; Ferrara et al., 2003; Lanzillotta et al., 2002; Andersson et al., 2007). The reason has been suggested to be due to greater vertical and turbulence mixing (Nerentorp Mastromonaco et al., 2017; Lanzillotta et al., 2002), lower friction velocities and surface roughness (Fantozzi et al., 2013), and the presence of dissolved organic carbon (DOC) and suspended particles (Ferrara et al., 2003; Amyot et al., 1997).

In summary, we see that merely calculating and discussing correlations will not capture the underlying causal mechanisms by which different environmental forcings influence DGM. What is needed instead is an approach that can separate correlation from causation while also accounting for cause–effect relationships among the forcings themselves, such as solar radiation influencing temperature.

1.2 Outline and purpose of the paper

The intention of this paper is to provide a discussion of the role of graphical causal models in environmental research and to present suggestions on how effect sizes from observational data in environmental science can be systematically obtained and reported.

In Sect. 2, the paper describes the used case study of continuous measurements of gaseous elemental Hg (CMW) and calculated DGM in seawater, carried out on the west coast of Sweden in 2020. Section 3 introduces a framework for causal inference using observational data. Section 4 then describes how the proposed framework for causal inference is applied to the case data for inferring the effect sizes of different forcing, such as solar radiation, sea surface temperature, wind speed, and speed of the instrument feeding water pump on measured Hg concentrations CMW. Section 5 presents the results from the case study and in Sect. 6 these results and the application of the framework for causal inference using observational environmental data are discussed, leading to concluding remarks and suggestions for further research presented in Sect. 7.

The measurement campaign was conducted between 5 December 2019 and 8 October 2020 at the Kristineberg Marine Research Station, located on the Swedish West-coast (58.25013° N, 11.44485° E). Kristineberg is located at the entry of the Gullmarsfjord in the Skagerrak Sea which is classified as a natural reserve. With its shallow waters it serves as an important reproduction site for shellfish. The data for this study were collected during the period 1 to 25 April 2020, which is an interesting time period for our case study due to the good mixture between dark and sunlit hours in Scandinavia at this time of the year. All data are presented in Table 3 and Fig. 6 in Sect. 5.1.

2.1 Measurements of Hg concentration in surface water

Measuring DGM in water is commonly performed by manually collecting a water sample which is purged using an inert gas, resulting in that gaseous Hg is released and pre-concentrated on an adsorbent trap (typically gold), which is anlalysed with thermal desorption and detection using cold vapour atomic flourescence spectrometry (CVAFS) or cold vapour atomic absorption spectrometry (CVAAS). With manual sampling, DGM is easily calculated by dividing the total amount of Hg captured on the adsorbant trap with the sample volume (Gårdfeldt et al., 2002; Andersson et al., 2008a). However, continuous sampling is often preferable to increase time resolution which is needed for studying the dynamics of DGM in surface waters. Continuous sampling methods are normally divided by two different approaches; one where the aim is to extract all Hg from the continuous inflow of water and a second approach which aims to establish an equilibrium between Hg in the water phase and the gas phase. In the second approach, which is used in this study, the DGM concentration is normally recalculated by dividing the measured Hg concentration in outgoing air by the dimensionless Henry´s law constant that describes the partitioning of mercury between the gaseous and aqueous phase. Henry's law constant for mercury in seawater has previously been determined by Andersson et al. (2008b) to be temperature dependent and is calculated as

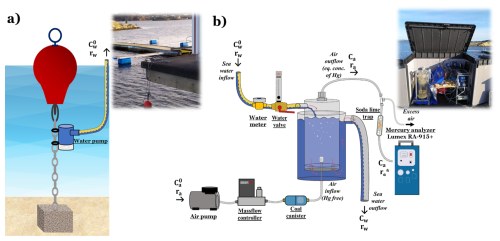

The automatic continuous equilibrium system used in this study, developed by Andersson et al. (2008a) and further used in e.g., Nerentorp Mastromonaco et al. (2017) and in Osterwalder et al. (2020), consist of an inner cylinder in which incoming seawater enters continuously from the top, see Fig. 1b. A purging system, consisting of a glass frit, was installed at the bottom of the inner cylinder. The air used for purging was pumped using an air pump. A massflow controller regulated the air flow (ra) to a fixated 1.5 L min−1. Ambient air normally contains small amount of Hg (ca0 in Fig. 1b), but in our set-up, a coal canister was used to remove Hg from the purging air. After purging through the water in the inner cylinder, the air outflow, now containing the equilibrium concentration of extracted gaseous mercury (CMW), was first led through a soda lime trap and a polytetrafluoroethylene (PTFE, 2 µm pore size, 47 mm diameter) filter to prevent particles and moisture from entering the analyser. The purged sea water flowed out via the bottom of the inner cylinder, moving upwards inside the outer cylinder and was then discharged back to the sea via a rubber tubing. The purpose of the backflow system using an outer cylinder is to serve as isolation to keep the temperature in the inner cylinder as stable as possible during purging. All small tubing in the system was made of FEP (fluorinated ethylene propylene).

Figure 1Experimental set-up for measuring gaseous mercury in surface water: (a) sampling site consisting of a red buoy which holds up a chain that is attached to the bottom with an anchor. The water pump is fixated on the chain about 1 m below the buoy; (b) measurement site containing the continuous equilibrium system for extracting gaseous mercury from seawater and the Lumex RA-914+ mercury analyser.

Finally, the equilibrium concentration of extracted gaseous elemental mercury (CMW) in the air outflow was analysed every minute using a Lumex RA-914+ CVAAS. Calibration of the instrument was performed automatically every three hours by adjusting the baseline using mercury free air. The detection limit of the instrument has previously been determined to be 0.5 ng m−3, having a precision of ±5 % (Nerentorp Mastromonaco et al., 2017). The blank of the automated continuous equilibrium system was determined by turning off the water pump, letting water within the system be depleted of gaseous Hg. The detection limit of the system was determined to 0.32 pg L−1, calculated as three times the standard deviation of the blanks.

CMW measured with the analyser can be used to calculate the concentration of dissolved gaseous mercury (DGM) in incoming seawater. If Ca0 is removed from Hg, the equation to calculate DGM can be simplified to (Andersson et al., 2008a; Gårdfeldt et al., 2002):

where CMW is the measured Hg concentration in the air outflow from the purging system (pg L−1), H′ is the dimensionless Henry's law constant that describes the partitioning of mercury between the gaseous and aqueous phase. The variables rA and rw denote the flow rates of purging air and seawater (L min−1), respectively. When studying Eqs. (1) and (2) it becomes clear that sea water temperature is already integrated into the calculation of DGM, which can cause uncontrollable feedback loops when studying direct effects between DGM and sea surface temperature in our model. To avoid this problem, CMW was chosen as a outcome variable instead of DGM in this study. Calculated DGM concentrations, which in this study only are presented for comparison, are presented in Table 3 and Fig. 6f in Sect. 5.1.

The measurements were conducted in shallow waters (< 10 m depth) at the harbour of Kristineberg. At the sampling site, a commercial 12 V bilge pump1 with the capacity to pump 32 L min−1 was installed on a chain, attached to a buoy and an anchor, at 1 m below the buoy to keep the same water depth to the surface, disregarding tide or waves, see Fig. 1a. A cage of fine mesh net was installed around the pump to reduce pump clogging problems due to algal growth (not shown in Fig. 1a). The seawater was pumped from the sampling site to the measurement site through a rubber tubing, lying on the bottom of the sea. The distance between the sampling site and the measurement site was less than 10 m. At the measurement site (Fig. 1b), a plastic cabinet shielded the equipment from weather and sun. A temperature controlled radiator inside the cabinet kept the equipment at a constant temperature. The flow of incoming seawater from the measuring site was measured and controlled using a water meter and a valve. The regulated water flow in Fig. 1 is denoted rW. A constant water flow was hard to obtain due to the pump experienced problems with growth of algae and clogging, despite installing a protective net. During April 2020, rw varied between 0 and 40 L min−1, with an average flow rate of 2.8 L min−1, see Table 3 and Fig. 6e in Sect. 5.1.

2.2 Measurements of solar radiation and surface water temperature

Solar radiation, denoted as Sol, was measured using a pyranometer sensor from Renke, model RS-TBQ measuring both direct and diffuse solar irradiance with a measurement range of 0–2000 W m−2, a resolution of 1 W m−2 and an accuracy of ±3 %. The sensor was installed on the roof of the measurement box, having no shadowing. The output of the sensor was read by an Arduino embedded computer which transformed the output voltage into irradiance in W m−2 and sent the result to a central computer for logging alongside the other measurements. The temperature of the incoming seawater was measured at the inlet to the automated continuous equilibrium system using a DS18B20 temperature probe connected and processed by the same Arduino embedded computer.

Other weather data, such as wind speed, were downloaded from a weather station situated in the Gullmarsfjord close to the research station. These measurements are run by the University of Gothenburg and are available online (University of Gothenburg, 2024).

All variables have been resampled to 5 min intervals using a moving average filter. This has been done for two reasons: firstly, we did not suspect that the dynamics of the observed effects are faster than 10 min, which is twice the new sampling rate and therefore fulfils the Nyquist–Shannon sampling theorem. Secondly, we reduced the amount of datapoints which allowed for faster processing in the modelling steps. The measurements of solar radiation, seawater temperature and wind speed are presented in Table 3 and Fig. 6a, b and d, respectively.

The workflow to estimate effect sizes in this study is presented as a framework for researchers conducting causal inference from observational data. It builds on existing causal inference workflows proposed in behavioural science (Deffner et al., 2022) and software engineering (Furia et al., 2024). However, unlike these domains, environmental research relies to a much higher degree on data from intervention-free observational studies, motivating a tailored framework for causal inference using observational environmental data and prior scientific knowledge from researchers. Here, causal relationships are not discovered from the observational data itself, but are assumed based on prior experimental and scientific knowledge, such as laboratory studies demonstrating photochemical DGM production under controlled conditions. The scope of the proposed framework is then to quantify how multiple established or assumed causal processes jointly contribute to observed variability under natural, intervention-free field conditions. Using causal models, as suggested in this framework, conceptually allows individual causal pathways to be “switched off” within the model. This allows assessing the causal pathways' relative contribution without the need to physically intervene in the environmental system, which often is impossible in field observation.

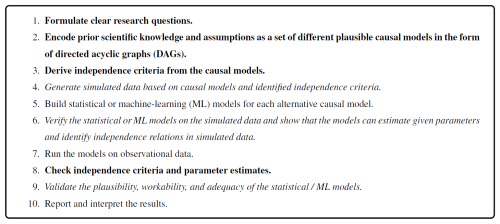

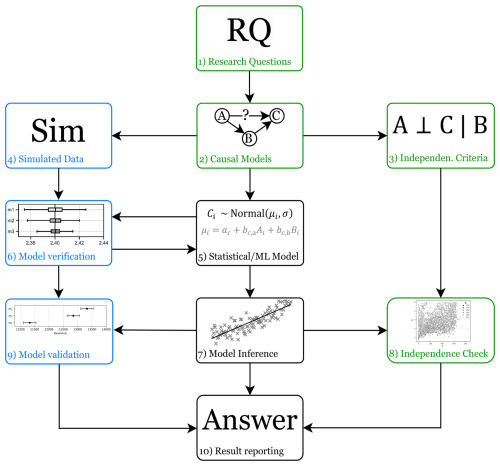

The proposed framework consists of ten steps which can be divided into three groups: steps for incorporating and testing prior knowledge (steps 1, 2, 3, and 8), steps for collecting evidence of plausibility, workability, and adequacy (steps 4, 6, and 9), and steps for generating answers to research questions (steps 5, 7, and 10). The steps are interlinked, which suggests an order in which we propose to execute them as shown in Fig. 2.

Figure 2A workflow for causal inference of statistical models using observational data and prior knowledge about plausible cause-effect relationships.

In steps 1–3, research questions are formulated, and prior scientific knowledge and assumptions are encoded in a set of possible graphical causal models. A causal model describes “relevant features of the world and how they interact with each other” (Pearl et al., 2016). Which features are relevant depends on the research questions and scope of the investigation. A causal model indicates how a change in one variable changes the value of another variable, regardless of the functional form of the relationship. Causal models include two types of variables. The first type, exogenous variables, are not explained further within the model. For example, solar radiation Sol affects ocean surface temperature TS (Sol → TS). Here, we treat Sol as an exogenous variable without modelling upstream mechanisms such as the sun’s fusion reactions. The second type, endogenous variables, by contrast, are always descendants of at least one exogenous variable. Directed Acyclic Graphs (DAGs) provide an accessible visualisation of causal models with nodes representing the variables of a system of interest and directed edges representing the direction of cause-and-effect. The causal model of the earlier example Sol → TS is in the form of a DAG, with the arrow → indicating that solar radiation is a cause of changes in surface temperature, and not the other way around. Note that in this framework, causal arrows are not inferred from data but represent a priori assumptions about cause-effect directions derived from domain knowledge or experimental evidence. The graphical representation of causal models through DAGs is qualitative, i.e., it provides information about the direction of cause-and-effects between variables, but it does not provide information about the strength or functional properties of the causal relationships. Causal inference, as proposed in this framework, provides the quantification of effect sizes and it evaluates whether the observational data is consistent with the assumed qualitative causal structure. Pearce and Lawlor (2016) provide an overview of properties of DAGs representing causal models:

-

DAGs are directed, i.e., the arrows can only have a single head pointing from the cause towards the effect.

-

DAGs are acyclic, meaning that there must not be a series of arrows (i.e., a path) resulting in a cyclic path. Causal models are acyclic because a variable at a given point in time cannot be the cause of itself.

-

An arrow indicates that (we assume) a causal relationship exists between two variables, where the first variable has an effect on the second variable.

-

The absence of an arrow indicates that the values of two variables are not causally related, i.e., a change in the value of one variable has no effect on the value of the second variable.

-

The outcome variable is typically an endogenous variable which is the target of an investigation and effect of interest which value is influenced by parent variables of the causal system.

-

The exposure variables represent causes of interest. They are usually nodes with outgoing edges. Exposure variables are exogenous variables in a causal model if they have only outgoing edges. Otherwise, they are endogenous variables which value are being influenced by other variables, such as for example sensor noise effects. Exogenous variables are always root nodes in DAGs, i.e., they do not have incoming edges.

A key function of the graphical causal model is to make prior assumptions explicit. By explicitly encoding the researchers' prior causal knowledge as DAG they become open to criticism and possible later refinement. Furthermore, it is necessary to define the direction of cause-and-effect a priori, because statistical models cannot distinguish between cause and effect as they only identify association but not causation. If the direction of cause and effect is not known, or if the existence of a causal relationship is uncertain a priori, several alternative causal models can be proposed. Based on the proposed causal models, independence criteria are derived using mathematical methods such as d-separation (Pearl et al., 2016). These independence criteria derived from the assumed causal model can later be used to empirically validate the plausibility of the DAG against the observed data by checking for expected associations, or the lack thereof. Causal relations are not discovered from the data directly but evaluated by assessing whether the observed data are consistent with the independence relations implied by the a priori defined causal models. This concept is referred to as the faithfulness assumption, i.e., that the observed data follows the independence criteria suggested in the assumed causal graph (Spirtes et al., 2000). Tools exist, such as DAGitty (Textor et al., 2016) that automatically derive these independence criteria from graphical causal models.

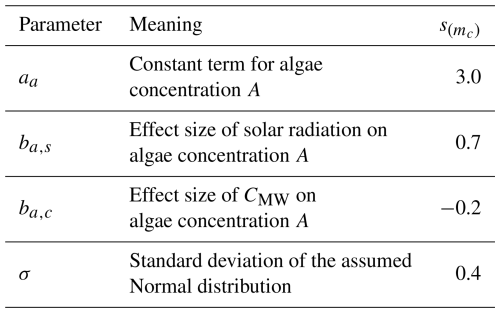

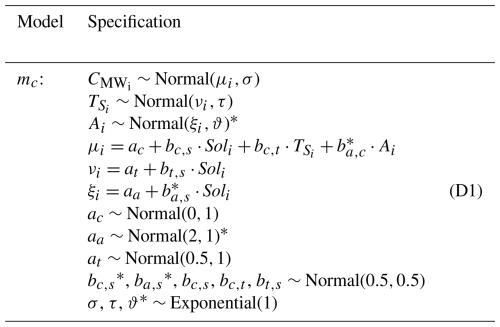

In Step 4, simulated data are generated for each of the proposed causal models. These simulations emulate the suggested causal relationships to create artificial outcome variable data. Then, in Step 5, the statistical models or ML models are defined. The prior knowledge of causal relationships can be used to define the statistical models accordingly, which may result in several different possible statistical models representing different prior assumptions. Some statistical models, such as generalised linear models (GLMs) for Bayesian data analysis (BDA), allow the explicit inclusion of additional a priori knowledge for example through the selection of predictor variables or the choice of prior distributions. In Step 6, the correctness of the models is verified on simulated data. Each model is verified against each of the simulated data sets, where each data set represents one of the possible causal models. It is tested whether the models can correctly represent prior knowledge (e.g., through prior predictive plots) and whether the models can infer the effect sizes that were set to generate the simulated outcome data. This not only tests whether the models work as intended, but also how the different models behave under different assumed causal relationships.

The models are run on the observed data in Step 7. Then, in Step 8, the inferred parameters are used, to check for statistical independence between variables. This can be accompanied by manual independence checks such as scatter plots. The results of the independence check are compared with the previously established independence criteria to provide evidence for a final candidate model. Step 9 then aims to collect evidence for the plausibility, workability, and adequacy of the models. Plausibility can be established by checking that the defining parameters and settings of the models, such as the choice of a-priori distributions, follow the prior knowledge of the researchers. Workability can be assessed by checking that the models can infer results without encountering computational problems, such as numerical difficulties or divergences. Adequacy of the models can be established by inspecting posterior predictive plots and checking that the models can infer the outcome variable reasonably well. The different proposed models can be compared in terms of predictive capability (e.g., using divergence measures). However, the model with the highest predictive power is not necessarily the model that allows for the extraction of correct causal knowledge from the estimated parameters which is further discussed as supplementary material in Appendix D.

In Step 10, a causal knowledge is extracted from the statistical models and possible answers to the research questions are given. In addition to the outcome of the model inference itself, the answer should also report on the validation results of the models, the outcome of the independence checks, and the included prior assumptions and knowledge encoded in the causal models. In the analysis and discussion, we have assumed that the regression coefficients can be interpreted as effect sizes. This assumption requires the use of linear models2 and it requires that the underlying causal model is correct. The latter is the reason why it is important that prior knowledge and assumptions in the form of a causal model are communicated as part of the research answer. Without knowing the underlying assumed causal model, it is impossible to judge whether the regression coefficients can be correctly interpreted as effect sizes. It is a combination of all the elements in this framework that allow for the extraction of causal knowledge from the inference results: (a) prior knowledge and assumptions, (b) simulation, verification, and validation outcomes, and (c) inference results from the models.

In this section we explore the use of the proposed framework for causal inference using observational data in environmental research described in Sect. 3 by following workflow outlined in Fig. 2 using observational data from the case study described in Sect. 2.

4.1 Software and implementation

All steps of the framework were implemented using open-source software. DAGs and implied conditional independence relations were derived using DAGitty (Textor et al., 2016). Bayesian statistical models were specified in R with the rethinking package (McElreath, 2020) and Stan (Stan Development Team, 2024) as underlying inference engine. Data preprocessing and visualisations were performed in both R and Python using standard specific libraries. No commercial causal inference software or simulation software was used. All code and data required to reproduce the steps of the causal framework are provided in the replication package accompanying this manuscript.

4.1.1 Step 1: Formulate clear research questions

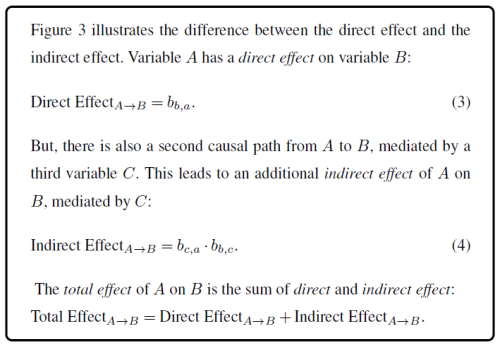

The observed association between two variables may be the result of several competing causal relationships between other observed and unobserved variables. Researchers may then be interested in identifying the direct effect and the total effect between an observed variable and an observed outcome. The difference is that the direct effect quantifies only the part of the association that relates to a direct influence of the variable of interest on the outcome. The total effect, however, also includes associations that are due to indirect effects which, for example, are mediated by other variables.

Causal models allow us to distinguish between a direct effect, which includes the part of the total effect of a forcing that acts immediately on an outcome, and the indirect effect, which accounts for the share of the effect size that is mediated through another factor. In other words, the distinction between direct and indirect effects is defined with respect to an a priori assumed causal graph. With such a graph, our frameworks estimates the magnitude of these effects conditional on the specified causal paths which usually cannot be identified from observational data alone.

Earlier studies investigating the correlations between DGM concentration, solar radiation and temperature relied on discrete sampling campaigns with limited temporal resolution (Amyot et al., 1997; Gårdfeldt et al., 2001; Dill et al., 2006). However, solar radiation varies on a timescale of minutes, whereas sea surface temperature responds more slowly due to the thermal inertia of water. Low-frequency data collapse these distinct timescales which makes the variables statistically collinear and inseparable. Therefore, in order to separate the direct effect of solar radiation on mercury concentrations from the indirect effects mediated by sea surface temperature, the data must contain sufficient variability in both the exposure and the mediator. Also, since sea surface temperature already is used to calculate DGM (see Eqs. 1 and 2), CMW was chosen as outcome variable instead of DGM in this study. This study provides data with high temporal resolution from automated long-term measurements of gaseous Hg concentration, solar radiation and surface seawater temperature. By using causal modelling, this study extends prior correlation-based research by quantifying the direct and indirect effect sizes of solar radiation on Hg concentration in seawater.

The presence of additional factors can influence one or more predictor variables and the outcome at the same time can lead to confounding and bias in the estimated effect size between a predictor variable and the outcome. In our observational study, we considered wind, measured as wind speed W as potential confounding factors and the speed of the water pump, measured as rW, as disturbing instrument-intrinsic factor. We expected wind to affect CMW by diffusing mercury and to affect TS through heat transfer and evaporation of water. Furthermore, we know that a fluctuating pump speed can influence the measured Hg concentration CMW. We also assume that the pump speed can be influenced by solar radiation and temperature (e.g., through the growth of algae that clog the pump inlet) and by wind which causes waves that can affect the inlet of the pump. The identification of these confounding and disturbing factors led to the development of an additional model that was also tested in this study. This was done to identify how important they are to our measured CMW and how they would affect the desired study of association between Sol and CMW. Ultimately, this study aims to use causal and statistical modelling to infer the effect sizes of different forcings, such as solar radiation, sea surface temperature, wind speed, and water flow on CMW.

4.1.2 Step 2: Encode prior scientific knowledge and assumptions as a set of different plausible causal models in the form of DAGs

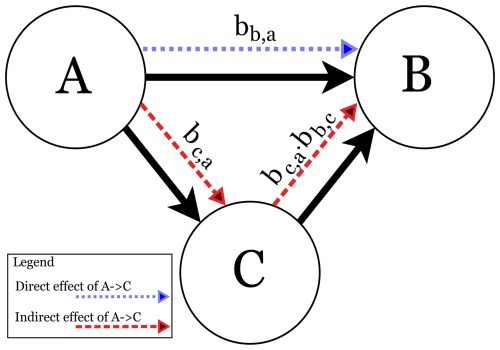

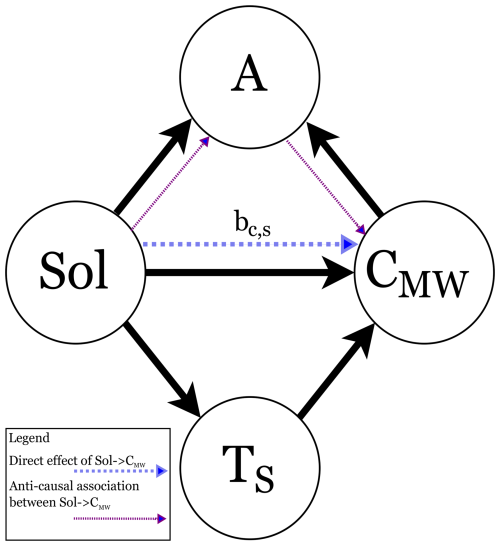

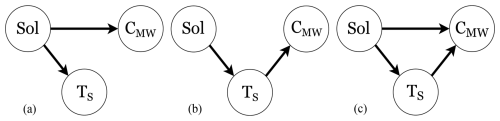

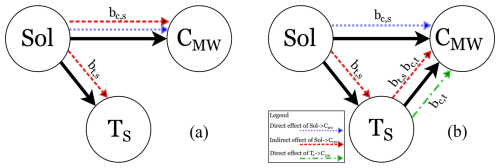

In this study, we intended to identify the effect of solar radiation Sol on the measured Hg concentration CMW. Figure 4 provides an overview of three plausible models of the cause-effect relationships between the variables in this observational study, given the assumptions that Sol always affects TS.

Figure 4Directed Acyclic Graphs (DAGs), which encode assumptions about causal relationships between variables in the dataset.Sol: solar radiation; Ts: surface temperature of water; CMW: Hg concentration. (a) Model m1 representing an effect of Sol on CMW and on TS. (b) Model m2 representing an effect of Sol on CMW mediated by TS. (c) Model m3 representing an effect of Sol on Ts and CMW and an effect of Sol on TS.

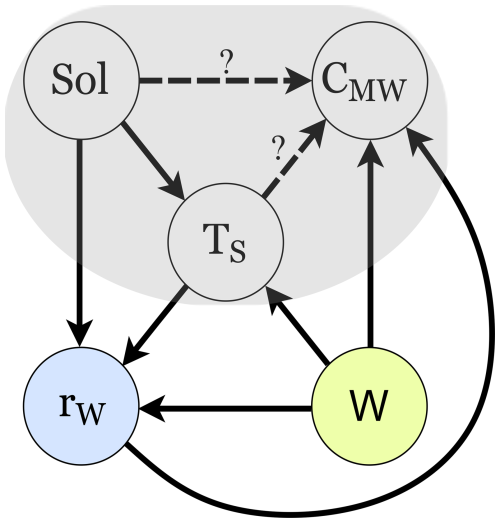

The identification of the confounding factor wind speed W and the disturbing instrument-intrinsic factor water pump speed rW resulted in the development of a fourth model, m4, presented in Fig. 5.

Figure 5Model m4 extending models m1 − m3 with external factors W representing wind and rW representing water pump speed.

We first had to investigate which of the “base” models m1 − m3 is most plausible given the data. Then, we included the external factors in m4 and estimated if the influence of the external factors is so strong that they could compromise our choice of “base” model3

4.1.3 Step 3: Derive independence criteria from the causal models

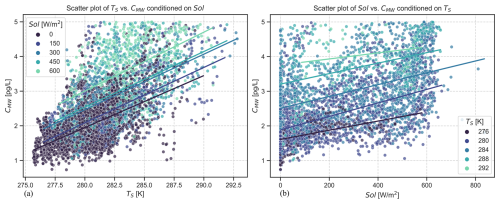

The DAGs shown in Fig. 4 provide information about plausible cause-and-effect relations and expected associations between the variables of interest. We expect an association between two variables if there is a cause-effect relationship between them4. However, association alone does not provide information about the direction of cause and effect. If we find an association between solar radiation (Sol) and surface temperature (TS) in the data, we assume from experience that it is the sun radiation that causes the change in surface temperature. On the other hand, the absence of an arrow between two variables suggests that there should be no association in the data. In the DAG depicted in Fig. 4a, the solar radiation acts as common cause on the measured Hg concentration (CMW) and the water surface temperature. Because of Sol acting as common cause, we will see a spurious correlation between CMW and TS in the data. This is called confounding and, in the DAG of Fig. 4a, Sol is therefore a confounding variable. Association can flow “anti-causally”, i.e., against the direction of cause-effect, from TS to Sol and from there in the direction of cause to CMW. Pearl et Mackenzie describe this as “association that flows in any direction along the edges” (Pearl and Mackenzie, 2018). However, the flow of association between CMW and TS can be blocked by conditioning on Sol. Then, given that we condition the data on Sol, TS and CMW become independent.

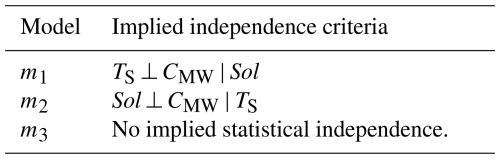

However, many researchers encounter the problem that a number of different causal models are possible given the correlations seen in the data. In our case, assuming that there is correlation between Sol, TS, and CMW, any of the cause models shown in Fig. 4 is plausible. There are two approaches that combined can help us identify the correct causal model: prior scientific knowledge and testing independence criteria. In fact, the causal models already include prior scientific knowledge by assuming the directions of cause and effect because there is no point in adding other compatible models in which the direction of cause and effect is obviously wrong, for example by assuming a change in TS could causes a change in Sol. Each DAG in Fig. 4 suggests a set of conditional independence criteria for the research problem that can be used to validate or invalidate the assumptions about cause-effect relationships. Table 1 provides the implied independence criteria for the DAGs of Fig. 4. For our study, we used two approaches to test independence, namely visual inspection of scatterplots and regression analysis. We expected the coefficients of the independent variables to be close to zero if the independence assumption holds. Non-parametric measures of association, such as the Spearman's rank correlation coefficient are not well suited to testing conditional independence between three continuous variables because these measures typically assess association, not independence, between only two continuous variables.

Table 1Implied statistical independence criteria based on assumed causal relationships in Fig. 4.

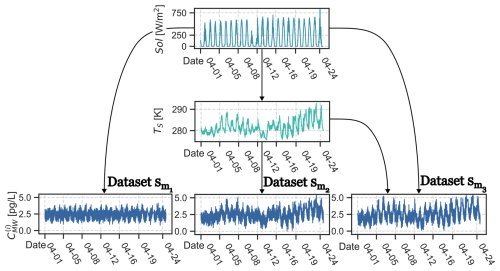

4.1.4 Step 4: Generate simulated data based on causal models and identified independence criteria

Simulated data were generated for each of the proposed causal models. Each simulated dataset was generated from a data-generating process using forward-sampling with fixed parameter values that reflect the causal assumptions encoded in the DAGs. As software, we used R with the rethinking package and Stan as underlying inference engine to implement the generative models. The simulations serve as a verification step to test if the statistical models can recover known parameters under assumed causal structure. They do not serve as a substitute for inference on observational data. Further details and results of the simulation are presented as supplementary material in Appendix B.

4.1.5 Step 5: Build statistical or machine-learning (ML) models for each alternative causal model

Statistical modelling

The aim of the statistical modelling for this research was to explore the causal relationships between the variables in the dataset. Initially, we built three Bayesian generalised linear regression models (GLMs) based on the causal models of Fig. 4. GLMs are a flexible family of statistical models widely used in empirical science (Furia et al., 2019; Gelman et al., 2020). They extend linear regression methods by introducing link functions and data type specific probability distributions which provides more flexible and accurate modelling of relations between variables in the dataset. We applied Bayesian data analysis (BDA), in combination with linear regression models, as a simple form of iterative ML. It is iterative in the sense that availability of new data in BDA allows for an update, not a complete recalculation, of the inference results.

We followed a mathematical description of the statistical model which is standard in statistical journals and outlined in three steps in Chap. 4.2 of McElreath (2020):

-

Data are represented as variables. Unobservable characteristics like averages, rates, etc. are defined as parameters. Each variable is either defined through other variables or probability distributions. Initial probability distributions assigned to the parameters are called priors.

-

The combination of variables, parameters, and their probability distributions form a joint generative model which can be used to analyse relations between variables, estimate parameters, infer unobserved variables, and predict future observations.

-

All predictor variables are standardised to avoid numerical problems due to large values and to allow for negative values (Marquardt, 1980). Standardisation means that all values are centred on zero and that the data have a standard deviation of one. This approach is also known as z-score normalisation.

After we identified model m3 as most appropriate (see Sect. 5.2), we extended it to model m4, shown in Fig. 5, by introducing the external confounding element wind W and the instrument-intrinsic factor pump speed rW.

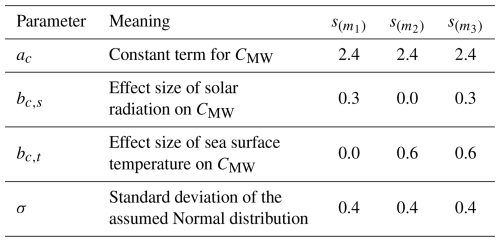

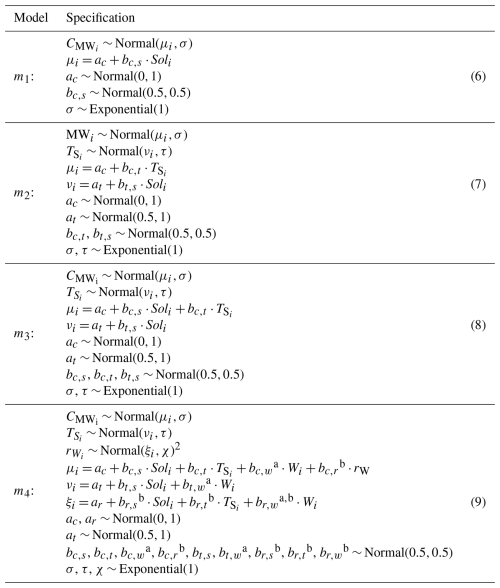

The mathematical definition of the models are listed in Table 2.

Table 2Model specifications.

a Terms that relate to the wind speed W. b Terms that were relate to the speed-pump rw.

The four resulting statistical models for predicting CMW differ due to different assumptions about cause-effect relationships as shown in Figs. 4 and 5. However, the models share a common structure: they contain definitions of the likelihood that define probability distributions of the outcome (in our case the measured CMW) given a set of parameters that define this probability distribution, for example through the mean μ and standard deviation σ for Normal distributions. Models m2 and m3 contain two likelihoods because in both models Sol affects the surface temperature Ts which then affects the measured CMW. Therefore, we needed to define two linked regression models, one for Ts with Sol as the predictor variable, and one for CMW with Ts as the predictor variable for model m2, and both Sol and Ts as the predictor variables for model m3. The parameters of the likelihood are declared in the form of either a deterministic function assignment (denoted through =) or stochastic relations (denoted through ∼), i.e., a mapping of a variable or parameter onto a distribution. When a parameter (e.g., b(c,t)) is mapped onto a distribution (e.g., the Normal distribution), this distribution is then referred to as a prior distribution.

Variables

There are four main variables in models: represents the measured Hg concentration, associated via Eq. (2) to DGM. It is chosen as outcome variable for all models. The index i denotes an individual data frame in the total dataset. represents the surface temperature of the water and is a predictor variable in models m2 − m3. Soli is another predictor variable in all models and represents solar radiation. In models m2 and m3, Soli is also a predictor for . The additional model m4 includes the confounding variable W and instrument-intrinsic factor rW. The biasing influence of these variables on our effect size estimates can be compensated for by including them in the prediction model for and . In this way, we “block” any anti-causal flow of association between TS and CMW via W and rW. The model m4 including the external influences also includes parameters describing the effect size of these external influencing factors W and rw: bc,w and bc,r represents the effect size between CMW and W, respectively rW, bt,w and bc,t between TS and W, respectively rW, br,s between Sol and rW, and br,w between W and rW. The parameter ξ represents the estimated standard variation for the rW data.

Likelihood

The role of the likelihood is to link the observed data to the distributions of the inferred model parameters (Furia et al., 2019). The likelihood provides a distribution function of how well the model explains the observed data given an inferred set of distributions for the model parameters. We assumed that the observed data can be represented through a Normal distribution because the central limit theorem states that the distribution of the sum of a large number of independent processes approaches a Normal distribution and environmental phenomena typically involve the aggregation of a large number of underlying processes. Furthermore, we had no reason to assume another distribution for the outcome CMW. However, Appendix G discusses the alternative choice of a log-normal likelihood for CMW that can often be appropriate for environmental data. As part of the GLM, the likelihood distribution is further specified through a linear model, i.e., the mean (e.g., μ is constructed through a linear combination of other parameters (e.g., ac + bc,s) and predictor variables (e.g., Soli). This link function between the likelihood and the parameter distribution of the model can take any functional relation based on the prior knowledge of the researcher. We decided to use a linear model because we do not have any prior knowledge that would justify the use of any other functional form, and it represents the most conventional functional relation between an outcome and its predictor variables. Bayes’ rule is then used to iteratively calculate a posterior distribution for the parameters of the model given the prior distributions, the observed data, and considering the likelihood, i.e., how well the currently assumed parameters explain the data:

Priors

In general, a prior tells researchers what assumptions are made about a parameter before they see any observed data. These assumptions can range from highly informative, where the distribution encodes strong prior beliefs about the parameter values, through weakly informative priors that provide mild regularisation, to non-informative priors that have very little influence on the posterior distribution. It is important to note that priors are continuously updated with the available observed data. With each iteration in BDA, the posterior will be used as new prior for the next iteration. That means, that the more data is available, the less influence prior beliefs have. With each iterative update, the prior distribution will be more influenced by the data distribution and therefore become increasingly dominated by the likelihood. In BDA, weakly informative priors are preferred in applied regression modelling because they provide mild regularisation (Lemoine, 2019). This prevents, for example, extreme parameter values, while at the same time allowing the data to shape the posterior distribution.

For the models of this study, we used weakly informative priors for all parameters. Because all predictor variables were standardised, such that the coefficients represent effects on a common scale, we used Normal priors with modest location and scale parameters that encode a coarse, “order-of-magnitude” expectation about plausible effect sizes and allowing both positive and negative effect sizes. Furthermore, the Normal distribution can represent a wide range of shapes from perfectly symmetric to slightly skewed which makes it a suitable choice if no other strong information is available about the shape of the prior distribution. We assumed exponential distributions for the parameters related to the variances because these must always be positive. The plausibility of the priors was assessed using prior predictive simulations for our models m1 to m4, which are presented as supplementary material in Appendix C.

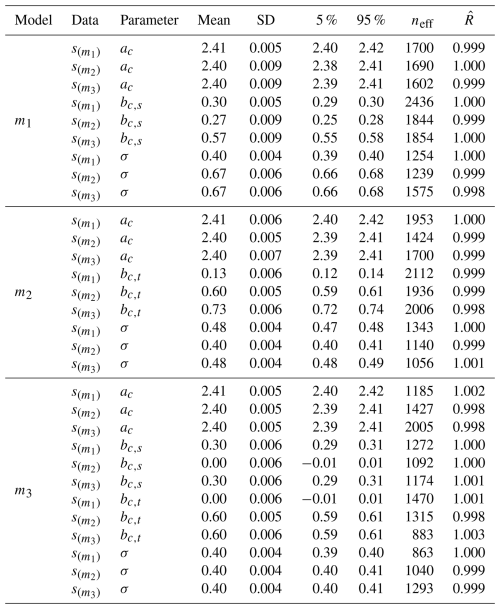

4.1.6 Step 6: Verify the models on the simulated data

In this step we show that the models can estimate the parameters set for the simulation and identify independence relations in simulated data. Each model was verified on the simulated data sets created in Step 4 by comparing the posterior parameter estimates with the known parameter values used in the data-generating process. The verification was considered successful if the posterior means recovered the true parameter values and if parameters corresponding to absent causal paths were estimated close to zero. The results of the parameter estimates for all models under simulated data are given in Appendix B.

4.1.7 Step 7: Run the models on observational data

Inferring data from the models means predicting the distributions of the parameters as well as predicting the posterior distributions of the outcome (i.e., CMW in all models) given the observed data for TS, Sol, CMW, and in case of model m4 the external influences W and rW. We applied a stochastic process known as Markov Chain Monte Carlo (MCMC) to find the posterior distribution. MCMC is considered a standard approach for Bayesian Data Analysis and an introduction to the technique is available in Brooks et al. (2011). In brief, MCMC works by running a Markov chain that samples data from posterior distribution. In a Markov chain, the next state only depends on the current state and not on any other states before that. Different techniques, such as Hamiltonian Monte Carlo (HMC), can be applied to find the next state in the Markov chain. In HMC, the next state is not determined purely at random, but instead through the gradient of the posterior distribution which allows an adaptive step size for each new state. To find the posterior distributions for the parameter and outcome using MCMC with HMC, we used the R-packages rethinking (McElreath, 2023) and Rstan (Stan Development Team, 2024).

4.1.8 Step 8: Checking of independence criteria and parameter estimates

Initially, scatter plots were created to check for statistical independence between the variables. These are presented and discussed as supplementary material in the Appendix E. Furthermore, the parameter estimates can be used to identify statistical independence between variables, which is discussed as part of the results in Sect. 5.2. The results of the independence check were compared with the established independence criteria to provide evidence for a final candidate model.

4.1.9 Step 9: Validating the plausibility, workability, and adequacy of the models

Furia et al. (2022) recommend validating three characteristics of statistical models for BDA: The models must be plausible, workable, and adequate.

Plausibility

Plausibility can be achieved by checking that the model is “consistent with (expert) knowledge about the data domain” (Furia et al., 2022). The key aspect here is that the models should include reasonable priors that they are consistent with expert knowledge and that are neither too permissive nor too constraining, especially in the case of limited available data (McElreath, 2020). The plausibility of the priors can be checked using prior predictive simulations which we provide as supplementary material in Appendix C.

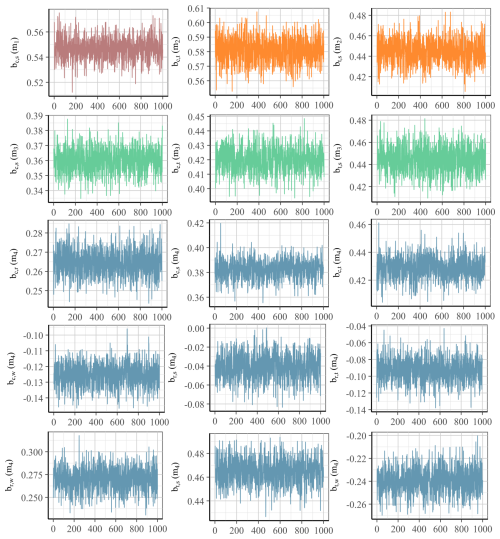

Workability

Second, the models must be workable, i.e., it must be computationally possible to fit the models to the provided data without, for example, encountering numerical difficulties. Numerical difficulties can arise from multicollinearity (Furia et al., 2022): if two variables in the data are very strongly correlated, the model cannot determine the ratio of contribution to the outcome between the two variables and we cannot determine the effect size for each of the variables. To check the workability of a model, the sampling process can be independently repeated a few times and the similarity between each sampling process can be estimated through the ratio of within-to-between chain variance . An -value close to 1 indicates a stationary posterior distribution which is desirable. Another metric that provides an indication of the workability of the model is the effective sample size. It indicates the size of samples that are not autocorrelated, i.e., it measures how much information is lost due to information redundancy between samples. A recommendation is that the effective sample size should be at least 10 % for each estimated parameter (Furia et al., 2022). Other indicators of numerical problems are divergent transition warnings that statistical toolboxes such as rethinking or the underlying Stan library may raise when evaluating the posterior distributions of the models. As part of the model validation for the proposed models we provide the -values and effective sample sizes for each model together with the detailed inference results in Appendix F. We also checked for warnings of divergent transitions while training the models on the data. A detailed discussion of the convergence assessment, including visual trace plots for the effect size parameters, can be found in Appendix H.

Adequacy

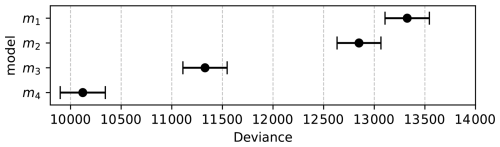

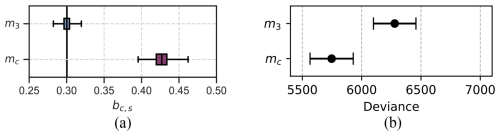

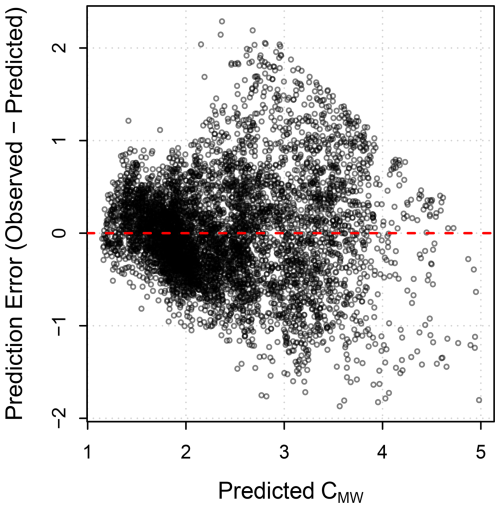

A final aspect of model validation is that the models must be adequate for the problem under investigation. An adequate model can generate data that are similar to the observational data (Furia et al., 2022). Adequacy can be tested by comparing the posterior predictive plots with the empirical data. A posterior predictive plot visualises the posterior predictive distribution which is obtained by evaluating the posterior distributions of the likelihood parameters, in our case μ and σ, and then sampling from the resulting probability distribution. We provide posterior predictive plots for each model as part of the model evaluation in Fig. 11. Statistical models can also be compared to each other using information criteria. The Watanabe-Akaike Information Criterion (WAIC) is an example of an information criterion commonly used for relative comparison of statistical models (Watanabe and Opper, 2010). It approximates the relative out-of-sample Kullback-Leibler (KL) divergence, i.e., it tells us something about the relative “statistical distance” of a model from the data. Because information criteria rely in some way on the KL divergence, which is not a metric but a relative measure, WAIC, and most other information criteria, are also relative measures of the adequacy of the models. They do not provide an absolute metric that can be used to determine the absolute adequacy of an individual model. Instead, information criteria such as WAIC can be used to compare models against each other. We provide WAIC scores for all models as part of the model evaluation in Sect. 5.3 and in Fig. 12. Wheras WAIC provides an expected out-of-sample predictive accuracy estimate, the coefficient of determination R2 summarises in-sample explanatory fits. In this analysis, WAIC and R2 are consistent for the models, but if they were to diverge, it would indicate that a model either fits the observed data well but generalises poorly, or generalises well but shows a reduced in-sample fit.

5.1 Measured data

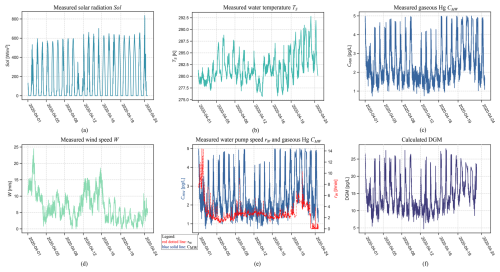

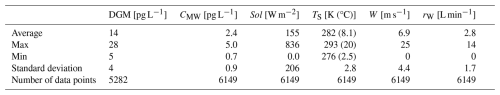

The data used for this study were collected between 1 April 2020 (13:20 UTC+1) and April 25th 2020 (02:29 UTC+1). Figure 6 and Table 3 summarise and present the observed data. The data have a coverage rate of 92 % for all measured parameters with 6149 valid data points. The month of April was chosen due to the good data coverage, which makes it already visually possible to see some co-variations between the variables in the data.

Table 3Summary statistics of calculated DGM and the measured parameters CMW, solar radiation (Sol), surface water temperature (TS), windspeed (W), and speed of the water pump (rW) during April 2020.

The measured CMW in Fig. 6c show clear diurnal patterns with peaking concentrations during daytime and lower concentrations during night-time. This coincides with the measured solar radiation shown in Fig. 6a. Solar radiation shows clear diurnal patterns with higher values during the day and lower values during the night, as to be expected. It is even possible to see in the patterns of the data that 11 April was a cloudy day, as less radiation was measured at noon. This explains why the peak in measured CMW was lower at this time. The measured surface water temperature, plotted in Fig. 6b, also shows variations with higher temperatures during the day and lower temperatures during the night, suggesting an association between solar radiation and surface water temperature. In Fig. 6d we also plotted CMW to show the distinct covariation of the pump speed rW and the measured Hg concentration CMW. Calculated DGM, shown in Fig. 6f, show similar diurnal patterns as for CMW. The average concentration during the measurement period was 14 pg L−1 (Table 3). During the summers in 1997 and 1998, Gårdfeldt et al. (2001) measured DGM by manual sampling at 20 cm depth in open seawater, about 1 km from the Kristineberg Marine Research Station, resulting in DGM concentrations varying between 40 and 100 pg L−1. However, it differs about 20 years between their and our measurements. More recent continuous measurements of DGM, performed in spring 2015 at the Råö/Rörvik station in Sweden (about 160 km south of Kristineberg), showed an average DGM surface concentration of 13 pg L−1 (Mastromonaco, 2016), which is in good agreement with our study. The literature review presented in Mastromonaco et al. (2017) show surface DGM concentrations varying between 11 and 32 pg L−1 in the Baltic Sea (15–20 pg L−1 in spring), 11–52 pg L−1 in the North Sea, 12 pg L−1 in the North Atlantic Ocean (summer) and about 20–30 pg L−1 in the Mediterranean Sea.

5.2 Parameter estimates and causal inference

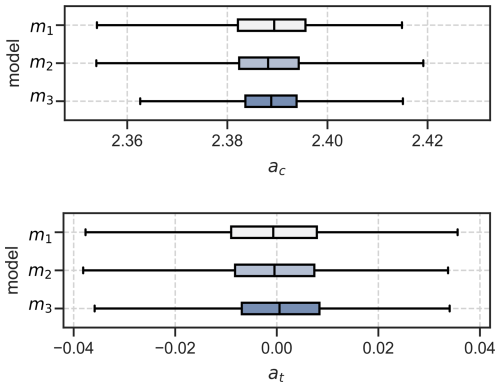

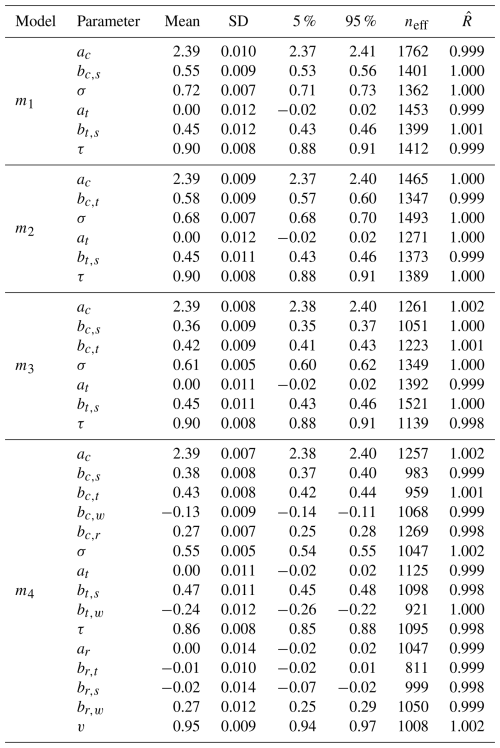

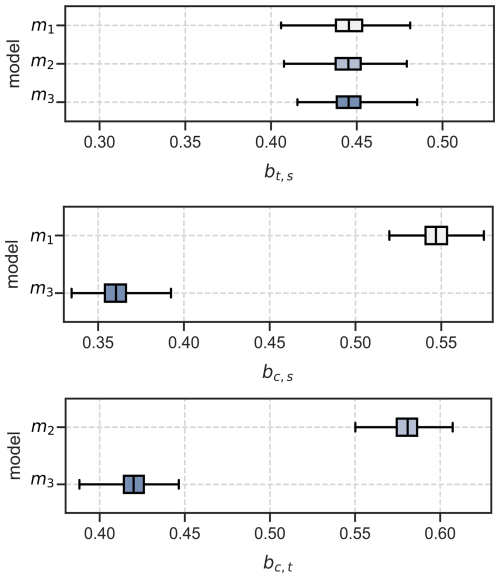

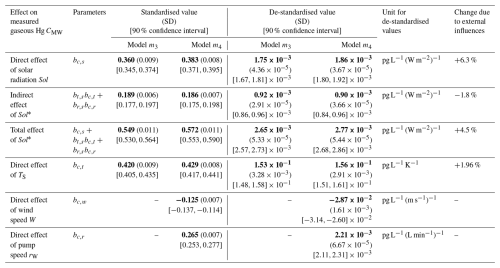

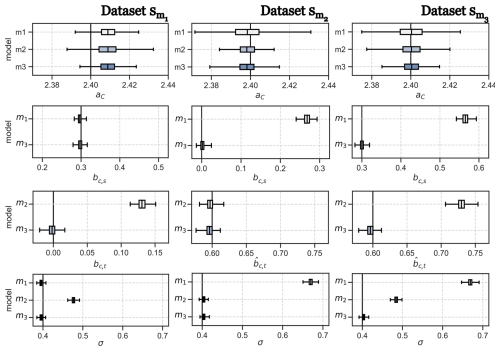

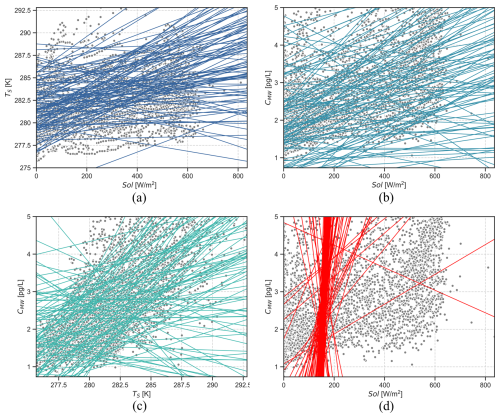

After fitting the models to the observed data, we obtained posterior distributions for each parameter of the models. Figure 7 shows the estimates for the constant parameters aC and aT of the models listed in Table 2. The first parameter aC is the constant offset in the Sol → CMW ← TS part of the models. It is approximately equal to the mean of the CMW values in data which is 2.4 pg L−1. The estimate for aT is close to zero for all models because we have standardised the predictor parameters Sol and TS. Figure 8 provides the estimates for the coefficients of effect sizes bt,s, bc,s, and bc,t. These estimates are important to answer what the direct and indirect effect of solar radiation on Hg concentration are (RQ1).

Figure 8Coefficient estimates for the effect size of solar radiation on temperature (bt,s), the effect size of solar radiation on Hg concentration (bc,s), and the effect size of sea surface temperature on Hg concentration (bc,t).

None of the effect size estimates are zero, which means that we cannot assume any statistical independence between the variables. We confirmed these results using manual examination of scatter plots, which is outlined as supplementary material in Appendix E. Hereafter, we propose model m3 is the most plausible causal model for the variables of interest.

5.2.1 Detailed argumentation on why model m3 is most plausible

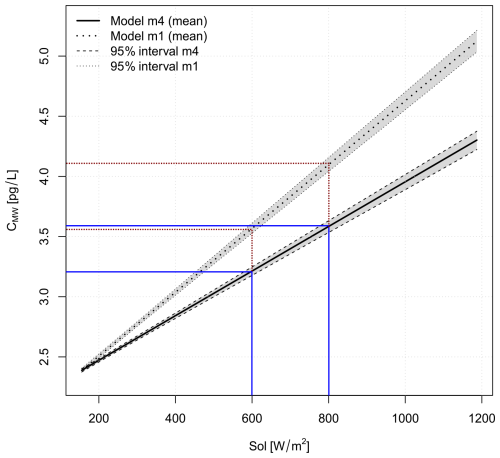

The parameter bc,s is the effect size between the standardised predictor variable Sol and CMW. It gives us an estimate of the strength of the association, and hence the assumed causal relationship, between solar radiation and measured Hg CMW. The estimated mean value for bc,s is 0.547 for model m1 and 0.360 for model m3. Model m2 has no estimate for bc,s because it assumed (incorrectly) a-priori that there is no causal relationship between Sol and CMW. Because we used standardised predictor variables, these effect size estimates imply that 1 standard deviation of solar radiation is associated with a change in CMW of 0.547 pg L−1 and, respectively for model m3, 0.360 pg L−1. Dividing the estimated effect size bc,s by the original standard deviation of the data for Sol gives the de-standardised effect size estimate :

Using the standard deviation of Sol given in Table 3, model m1 estimates that an increase of solar radiation by 1 W m−2 is associated with an average increase in CMW of pg L−1(W m−2)−1 = 0.00265 pg L−1(W m−2)−1 Model m3 estimates an increase in CMW of pg L−1(W m−2)−1 = 0.00175 pg L−1(W m−2)−1, which is 66 % of the estimate from m1. Assuming that there is a causal relationship between solar radiation and CMW, which model gives the correct estimate of the strength of the causal relationship between Sol and CMW?

5.2.2 Total effect and direct effect

In fact, both estimates of the strength of the causal relationship are correct. However, model m1 provides with bc,s the total effect. Model m3 provides instead the direct effect of Sol on CMW. If there is a causal relationship between Sol on CMW, there is also a “flow” of association between these variables. This “flow” of association can take different “routes” between the variables. In our case, solar radiation Sol has a direct effect on the concentrations of Hg (CMW), which means that we can measure an association between these two variables. Its effect size is estimated in our models as bc,s:

However, Sol also affects the sea surface temperature TS, which in turn affects CMW. Besides a direct effect, Sol also has an indirect effect on CMW, mediated by TS. The indirect effect of Sol on CMW can be calculated by multiplying the effect sizes for the paths Sol → TS and TS → CMW:

In summary, a part of the association between Sol and CMW “flows” via TS. The total effect of Sol on CMW is the sum of direct effect and indirect effect. This definition holds regardless of the sign of the individual path-specific effects: indirect effects with opposite signs represent competing causal mechanisms that (partially) can cancel each other out.

For model m3, the direct effect equals, on average, to bc,s = 0.360 and the indirect effect equals, on average, to = 0.445 ⋅ 0.420 = 0.187, as illustrated in Fig. 9b. Using Eq. (14), the total effect of Sol on CMW is then 0.360 + 0.187 = 0.547, which is exactly the estimate for bc,s that model m1 provided. By removing the path between TS and CMW, we do not allow a “flow” of association between TS and CMW in model m1, as illustrated in Fig. 9a. The association related to the indirect effect of Sol on CMW that is mediated by TS, therefore cannot flow through TS and is instead “rerouted” onto the direct path between Sol and CMW (red, wide dotted line in Fig. 9a). Consequently, the effect size bc,s, estimated by model m1, is the sum of the direct and indirect effects, thus in fact the total effect of Sol on CMW. Although individual mechanisms, such as the temperature dependence of Henry's law, may suggest opposing effects on equilibrium DGM, the causal model in this study is specified for measured mercury concentrations CMW. Empirically, the inferred effect bc,t of seawater temperature TS on CMW is positive in the observational data, which suggest that temperature-related processes in this measurement context are stronger than the opposing sub-mechanisms.

Figure 9DAGs with illustration of flow of association. Red, wide dotted line: association of solar radiation Sol on Hg concentration CMW, mediated by sea surface temperature TS. Blue, dense dotted line: association of Sol on CMW, not influenced by Sol. Green, mixed dotted line: association of TS on CMW. (a) Model m1 where the path TS → CMW is not allowed and the indirect effect of Sol on CMW is therefore “rerouted” on top of the direct effect of Sol on CMW. (b) Model m3 where the path TS → CMW is allowed and the indirect effect of Sol on CMW therefore can flow via TS.

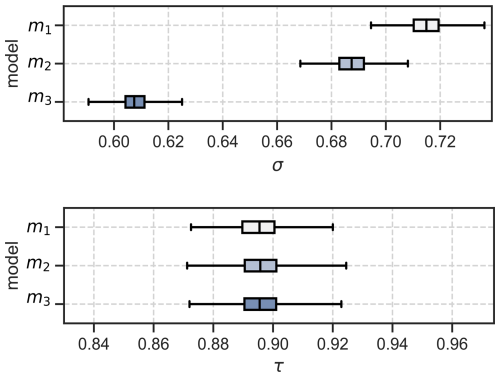

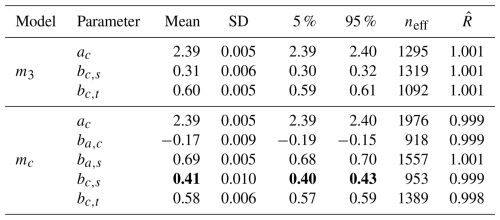

A similar line of argumentation can be provided for the difference of the estimate of bc,t between model m2 and model m3. Because the path Sol → CMW is not allowed in model m2, the association related to the direct effect of Sol on CMW is “rerouted” on the path Sol → TS → CMW. This results in an overestimate of the direct effect bc,t of TS on CMW. Due to the misspecification of models m1 and m2, these two models result in a higher standard deviation σ for the CMW data as shown in Fig. 10 because the models cannot correctly map all association between Sol, TS, and CMW. However, the standard deviation τ for the relation Sol → TS is identical for all models, because the path between Sol and TS exists in all models. Table 4 summarises the estimated effect sizes for model m3.

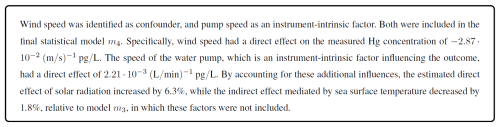

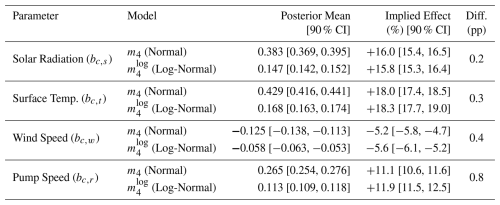

5.2.3 Adding estimates for the effect of external influencing factors in model m4

After having identified the most plausible “core” model, we extended it by adding estimates of external influencing factors. Wind, denoted as W, is an environmental factor that could affect the measurements of CMW. The speed of the water pump, rW, however, is a instrument-intrinsic factor that could have effect on the measurements for CMW. In line with other predictor variables, we chose identical priors for W and rW based on a prior predictive check. The results of the estimated effected sizes on CMW of the updated model m4 are presented in Table 4, while all parameter estimates are listed in Appendix F. The average effect size of wind W on surface temperature TS, denoted as bt,w is −0.24. This agrees with our expectations that an increase in wind speed results in a lowering of the sea surface temperature. The estimated average effect size of W on Hg concentration CMW, denoted as bc,w is −0.13. The estimated average effect size of wind is smaller compared to5 the effect sizes of surface temperature bc,t = 0.43 and solar radiation bc,s = 0.38. As we expected after inspecting Fig. 6e, the water pump speed rW has a distinct effect on the measured Hg concentration CMW, with an estimated average effect size of bc,r = 0.27. An interesting observation can be made when estimating the effect sizes of the other environmental variables, Sol, TS, and W, on the water pump speed. The effect sizes of solar radiation and sea surface temperature on the water pump speed are small with average effect sizes of br,s = − 0.04 and br,t = − 0.09 respectively. But the influence of wind speed on the water pump speed is strong with an average effect size of br,w = 0.27. All inferred effect sizes are listed in Table F1 in Appendix F. A possible explanation for the strong relationship between wind speed and water pump speed is that due to high winds, sea waves could have cleared the inlet of the pump from algae, resulting in a higher flow speed. Unlike model m3, where the indirect effect of solar radiation on CMW is only mediated by the sea surface temperature TS, model m4 allows for two additional mediation paths: Sol → rW → CMW, and Sol → TS → rW → CMW. However, the latter mediation path contributes only very weakly to the total indirect effect due to the small effect of surface temperature on pump speed (br,t). In contrast to the effect of solar radiation on pump speed (br,s), which is also relatively small, the credibility interval of the effect size br,t, listed in Table F1, contains zero. We therefore cannot exclude the possibility that the effect of sea surface temperature on pump speed is practically negligible and consequently do not interpret this compound path as a substantively important part of the total indirect effect. In summary, the inclusion of the confounding external factor wind W and instrument-intrinsic factor water pump speed rW lead to a noticeable increase in the estimated effect size of solar radiation Sol on measured Hg concentration CMW by 6.3 % compared to the estimate of model m3.

Table 4Estimates of the direct, indirect, and total effects based on observed data in April 2020 without (model m3) and with (model m4) recognition of wind and pump speed as external influences. Bold values are mean values; standard deviations are in parentheses; 90 % confidence intervals are depicted in square brackets.

* The additional compound mediation path Sol → TS → rW → CMW () is practically negligible due to the small effect br,t which includes zero in its 90 % credibility interval (see Table F1).

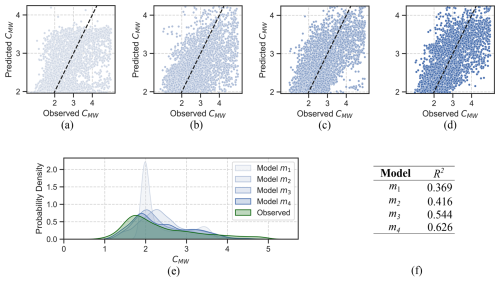

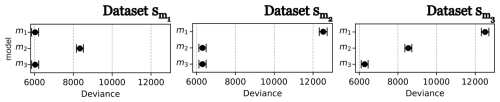

5.3 Results from model validation on observed data

We have already established that model m3 and its extension with external influences to model m4 are most plausible. Furthermore, all models are workable meaning that it is computationally possible to fit the models to the data provided. Evidence for this claim is that the R2 values for all parameters in all models are close to 1 indicating stable posterior distributions as listed in Table F1 in Appendix F. Further evidence of the workability of the models are the effective sample sizes neff which indicate that the samples contain sufficient information to infer the model parameters. The overall sample size was n = 6149. The smallest effective sample size occurred for parameter br,t of model m4 with neff = 811 which is more than the recommended ratio of at least 10 % of the total sample size. We did not receive any numerical warnings during the training of the models. Finally, we also checked that the models are adequate by comparing the posterior predictive plots with the empirical data as shown in Fig. 11a–d. The closer the points in these plots are to the black diagonal line, the better a model can predict the outcome variable. It is obvious that model m3 and m4 seem most adequate to predict CMW. This is further confirmed by plotting the posterior distribution densities, as shown in Fig. 11e. The posterior density of CMW in this plot is closest to the observed data density for model m4. We have also calculated the R2-values, shown in Fig. 11f), using the mean of the posterior predictions for CMW for all models. Model m3 has an R2 value of 0.544 and model m4 has the highest R2 value of all models with a value of 0.626, which indicates a moderate to good fit to the data. It is important to remember that model m3 only accounts for solar radiation, temperature changes and the interaction between these two external variables, and model m4 adds the additional influences of wind and variation of the water speed pump. The models ignore any additional external factors that might influence CMW. It is therefore a rather simple model of CMW, but yet the fit to the data is quite acceptable.

Figure 11Posterior predictive plot for models (a) m1, (b) m2, (c) m3, and (d) m4. The dotted lines indicate where the posterior prediction and the sample match exactly. Panel (e) provides the posterior distribution density for all models. Panel (f) provides the coefficients of determination (R2 values) for all models.

Finally, we calculated the WAIC information criterion which allows us to make a relative comparison of the statistical models. Figure 12 suggests that model m4 has the lowest deviance and is therefore the most adequate model. A lower deviance calculated by WAIC indicates a smaller statistical distance between the estimated posterior distribution of the model and the probability distribution of the observed data which we have also checked manually using the posterior distribution density plots in Fig. 11e.

When using statistical or ML methods to analyse observational data, researchers need to distinguish between predictive and analytical models. In a predictive model, the aim is to use the data to train a model that predicts future trends in an outcome of interest. Any observed variable that adds predictive power reduces the uncertainty of the outcome, and these variables are typically strongly associated with the outcome of interest. ML algorithms therefore require large amounts of data to find as many associations in the data as possible. However, purely predictive models are black boxes in terms of which features, or variables, in the data contribute to the outcome and to what extent. A warning example of how adding any variable that adds predictive power can lead to erroneous conclusions on effect sizes is given in Appendix D.

Besides predicting future trends, environmental research also seeks understanding of why an outcome occurs and to quantify the effects that determine them. Therefore, researchers need to move away from purely predictive models, which work only with associations in the data. Instead, they should refocus on analytical models, which provide estimates of the strength of different cause–effect relationships and help answer why-questions. Unfortunately, in most cases, it is not possible to estimate cause–effect relationships from observational data alone. In other fields, such as medicine or social science, researchers can conduct randomised controlled trials (RCTs) to determine the strength of causal relationships. Because RCTs are typically infeasible in environmental research due to the impossibility of isolating a “control” Earth system, alternative strategies must be found.

Here, we demonstrated how prior domain knowledge can be encoded as graphical causal models, providing:

-

Constraints in terms of the directions of cause and effect based on prior knowledge of researchers;

-

A basis for estimating effect sizes from observational data;

-

Independence conditions that enable model validation and provide transparency of assumptions.

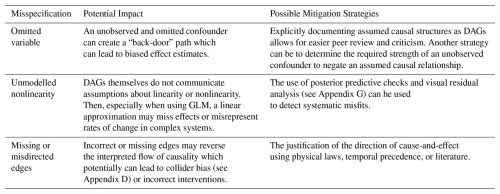

6.1 What causal inference adds beyond experiments and field observations

The causal framework in this study did not aim to discover previously unknown physical processes governing the formation of gaseous mercury in the oceans. Instead, the contribution lies in quantifying how known processes jointly contribute to observed variability under observational conditions outside of a laboratory. Specifically, using the suggested causal framework, it is possible to (i) separate total observed association between solar radiation and measured mercury into direct and temperature-mediated components, (ii) quantify the relative importance of these causal pathways, and (iii) adjust effect estimates for confounding influences such as environmental influences and instrument-intrinsic factors that are difficult to control in field observations. While laboratory and field experiments showed that solar radiation and sea surface temperature influence mercury emissions, the proposed causal framework allows these effects to be estimated simultaneously from observational data under explicitly and transparently stated causal assumptions. This causal inference technique therefore provides effect size estimates that are directly interpretable for large-scale modelling efforts or policy assessments, where controlled experiments may be infeasible. Causal conclusions, however, are conditional on the assumed causal models. DAGs, as graphical representations of causal knowledge, make prior causal knowledge explicit which allows other researchers to understand and criticise more easily the underlying assumptions. Such criticism is important because causal models are not immune to misspecification, such as by omitting unobserved but relevant confounders, leaving out, or misdirecting edges, which may lead to biased effect estimates. Table 5 lists a set of possible misspecifications and their mitigation strategies.

In the following, we revisit the research questions and summarise and discuss the answers we found and their implications for the mercury research community. Then, based on the findings and learnings from the case study, we discuss the use of causal inference in combination with prior knowledge in terms of its applicability to environmental research in general.

Answers to research questions

By applying the causal inference framework suggested in this paper, we found that solar radiation influences measured Hg concentrations in seawater both directly, and indirectly through its effect on sea surface temperature. We verified that the final model m4, although being a simple model of the cause-effect relationships for Hg concentration in seawater, can reconstruct the probability density of the observed data adequately well with an R2 value of 0.626. After adjusting for additional external influences (RQ2), we identified the following effect sizes: